At the Centers for Medicare & Medicaid Services (CMS), a large, mature enterprise made up of diverse contracting teams, there was no consistent way to gather and act on customer feedback. Instead, CMS leadership depended on fragmented data reported separately by each vendor.

Without standardized listening practices, CMS couldn't systematically understand user needs or correlate technical issues with user experience. Efforts were disjointed and reactive, and they lacked the context needed for meaningful improvement.

Qualitative and quantitative research need to be applied thoughtfully and consistently. As the Venn diagram illustrates, the "voice of the customer" is often missing from business roadmaps. This is the story of setting up a process for collecting customer feedback in a large enterprise.

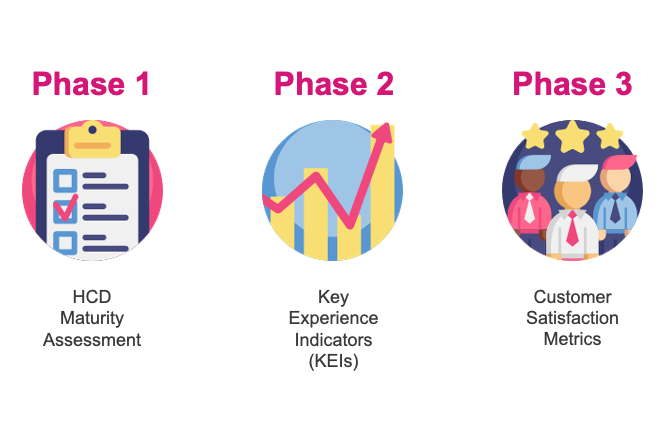

I spearheaded a three-phase initiative grounded in human-centered design:

The program is now operational. It has established baselines and enables CMS's IT leadership to identify trends, prioritize changes, and course-correct midstream. Although it is still early, the initiative has already fostered a culture of continuous improvement and positions the organization to draw on both qualitative and quantitative feedback when making high-impact decisions.

By embedding user feedback loops across digital touchpoints, we shifted from reactive fixes to proactive experience management. The effort strengthened collaboration between vendors and stakeholders, turned feedback into a strategic asset, and advanced CMS's move toward more user-centric, data-informed design.